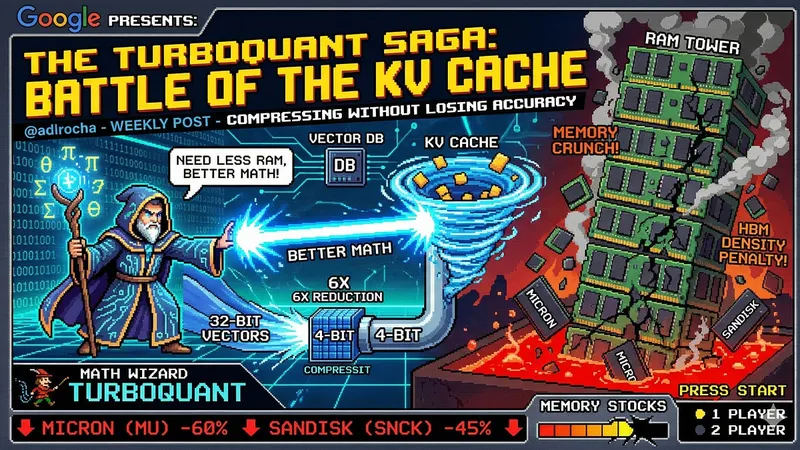

What if AI doesn't need more RAM but better math?

adlrocha.substack.com

March 29, 2026

10 min read

Summary

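TurboQuant, a compression algorithm from Google, shrinks the key-value (KV) cache used during large language model inference, improving memory efficiency without sacrificing accuracy. It addresses two pressures on the AI memory landscape: the density penalty of high-bandwidth memory (HBM), which yields fewer bits per wafer than conventional DRAM, and rising DRAM prices.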

Key Takeaways

- Google introduced TurboQuant, an algorithm that compresses the key-value (KV) cache in AI models without sacrificing accuracy.

- The KV cache stores the key and value vectors computed for each previous token (queries are not cached, because each query is needed only at its own decoding step). In long contexts it can consume more GPU memory than the model weights.

- Reducing the memory requirements of the KV cache could alleviate bottlenecks in production inference for AI models, enabling support for longer contexts and more simultaneous users.

- TurboQuant's approach suggests that improving mathematical efficiency in AI may be more beneficial than simply increasing hardware memory capacity.
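The claim that the KV cache can outgrow the weights is easy to check with back-of-the-envelope arithmetic. The sketch below assumes a hypothetical 7B-class configuration (32 layers, 32 key/value heads, head dimension 128, no grouped-query attention) and counts only cached keys and values. The 3-bit case is an illustrative setting that ignores per-vector scale metadata; it is not a published TurboQuant figure.

```python
# Back-of-the-envelope KV cache sizing.
# ASSUMPTION: the configuration below is hypothetical (roughly a 7B-parameter
# decoder without grouped-query attention); substitute a real model's values.


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bits_per_value: float) -> float:
    """Bytes needed to cache keys AND values (hence the factor of 2)."""
    values = 2 * layers * kv_heads * head_dim * seq_len * batch
    return values * bits_per_value / 8


GIB = 1024 ** 3

config = dict(layers=32, kv_heads=32, head_dim=128)
weights_gib = 7e9 * 2 / GIB  # 7B parameters stored as 16-bit floats

# One 128k-token sequence, 16-bit cache vs. an illustrative 3-bit cache
# (the 3-bit figure ignores any per-vector scale/offset overhead).
fp16 = kv_cache_bytes(**config, seq_len=128_000, batch=1, bits_per_value=16)
q3 = kv_cache_bytes(**config, seq_len=128_000, batch=1, bits_per_value=3)

print(f"weights (fp16):        {weights_gib:6.1f} GiB")
print(f"KV cache, 16-bit:      {fp16 / GIB:6.1f} GiB")
print(f"KV cache, 3-bit:       {q3 / GIB:6.1f} GiB")
```

Under these assumptions a single 128k-token sequence needs 62.5 GiB of KV cache at 16 bits, almost five times the roughly 13 GiB of fp16 weights, and about 11.7 GiB at 3 bits. The cache also grows linearly with batch size, which is why it limits the number of simultaneous users. Models with grouped-query attention share key/value heads and need proportionally less.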

Community Sentiment

Positives

- Exploring alternative mathematical approaches could lead to significant advancements in AI efficiency, potentially reducing reliance on massive memory resources.

- Optimizations like extreme quantization and Kolmogorov-Arnold Networks (KANs) suggest that better computational methods can improve performance without simply increasing resource demands.
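To make "extreme quantization" concrete, here is a minimal sketch of one idea used by low-bit vector quantizers: randomly rotate a vector before rounding each coordinate to a few bits, so that a single outlier channel no longer dictates the quantization step for the whole vector. This is a simplified stand-in, not TurboQuant's published algorithm. The sign-flip-plus-Walsh-Hadamard rotation, the uniform min/max quantizer, the 3-bit setting, and the test vector with one outlier are all illustrative assumptions.

```python
import math
import random


def fwht(v: list[float]) -> list[float]:
    """Normalized fast Walsh-Hadamard transform (length must be a power of 2).
    With the 1/sqrt(n) factor it is orthogonal and is its own inverse."""
    v = list(v)
    h = 1
    while h < len(v):
        for i in range(0, len(v), 2 * h):
            for j in range(i, i + h):
                a, b = v[j], v[j + h]
                v[j], v[j + h] = a + b, a - b
        h *= 2
    norm = math.sqrt(len(v))
    return [x / norm for x in v]


def rotate(v: list[float], signs: list[float]) -> list[float]:
    # Random sign flips followed by a Hadamard transform: a cheap random rotation.
    return fwht([s * x for s, x in zip(signs, v)])


def unrotate(v: list[float], signs: list[float]) -> list[float]:
    # Inverse: the normalized Hadamard undoes itself, then undo the sign flips.
    return [s * x for s, x in zip(signs, fwht(v))]


def quantize(v: list[float], bits: int):
    """Uniform per-vector quantizer: `bits` bits per coordinate plus (lo, step)."""
    lo, hi = min(v), max(v)
    levels = 2 ** bits - 1
    step = (hi - lo) / levels if hi > lo else 1.0
    return [round((x - lo) / step) for x in v], lo, step


def dequantize(codes: list[int], lo: float, step: float) -> list[float]:
    return [lo + c * step for c in codes]


def mse(a: list[float], b: list[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


rng = random.Random(0)
n, bits = 64, 3
x = [rng.gauss(0.0, 1.0) for _ in range(n)]
x[5] = 50.0  # hypothetical outlier channel
signs = [rng.choice((-1.0, 1.0)) for _ in range(n)]

plain = dequantize(*quantize(x, bits))
rotated = unrotate(dequantize(*quantize(rotate(x, signs), bits)), signs)

print(f"{bits}-bit MSE without rotation: {mse(x, plain):.4f}")
print(f"{bits}-bit MSE with rotation:    {mse(x, rotated):.4f}")
```

Without rotation, the outlier stretches the range so far that most ordinary coordinates collapse onto one or two levels; after rotation its energy is spread evenly across all coordinates and the step size shrinks accordingly. The rotation needs no training and costs O(n log n) per vector, and each vector stores only `bits` bits per coordinate plus two floats (`lo` and `step`).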

Concerns

- The demand for memory is unlikely to decrease, as AI companies will continue to seek unlimited resources regardless of improvements in mathematical techniques.

- Current RAM shortages highlight the ongoing tension between rising hardware costs and the push for more efficient mathematical methods in AI development.

Related Articles

TurboQuant: Redefining AI efficiency with extreme compression

Mar 25, 2026

TurboQuant: A first-principles walkthrough

Apr 27, 2026

LLM Neuroanatomy II: Modern LLM Hacking and Hints of a Universal Language?

Mar 24, 2026

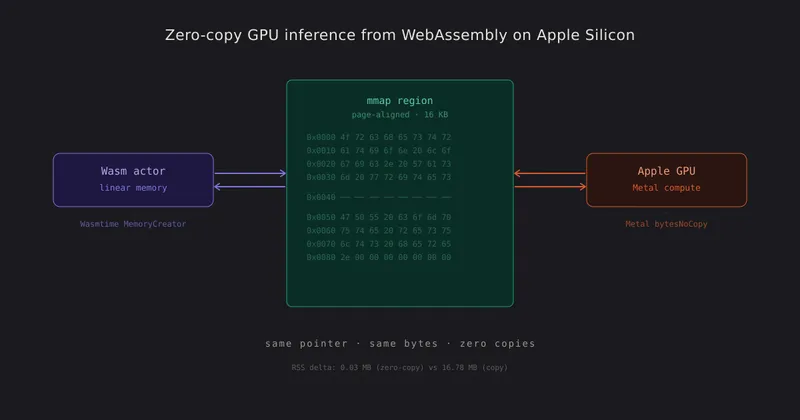

Zero-Copy GPU Inference from WebAssembly on Apple Silicon

Apr 18, 2026

OpenAI should build Slack

Feb 14, 2026