Reinforcement Learning from Human Feedback

arxiv.org

February 7, 2026

2 min read

Summary

Reinforcement learning from human feedback (RLHF) is a key technique for deploying advanced machine learning systems. A new book provides an introduction to the core methods of RLHF for readers with a quantitative background.

Key Takeaways

- Reinforcement learning from human feedback (RLHF) is a critical tool for deploying advanced machine learning systems.

- The book covers the origins of RLHF, including its connections to economics, philosophy, and optimal control.

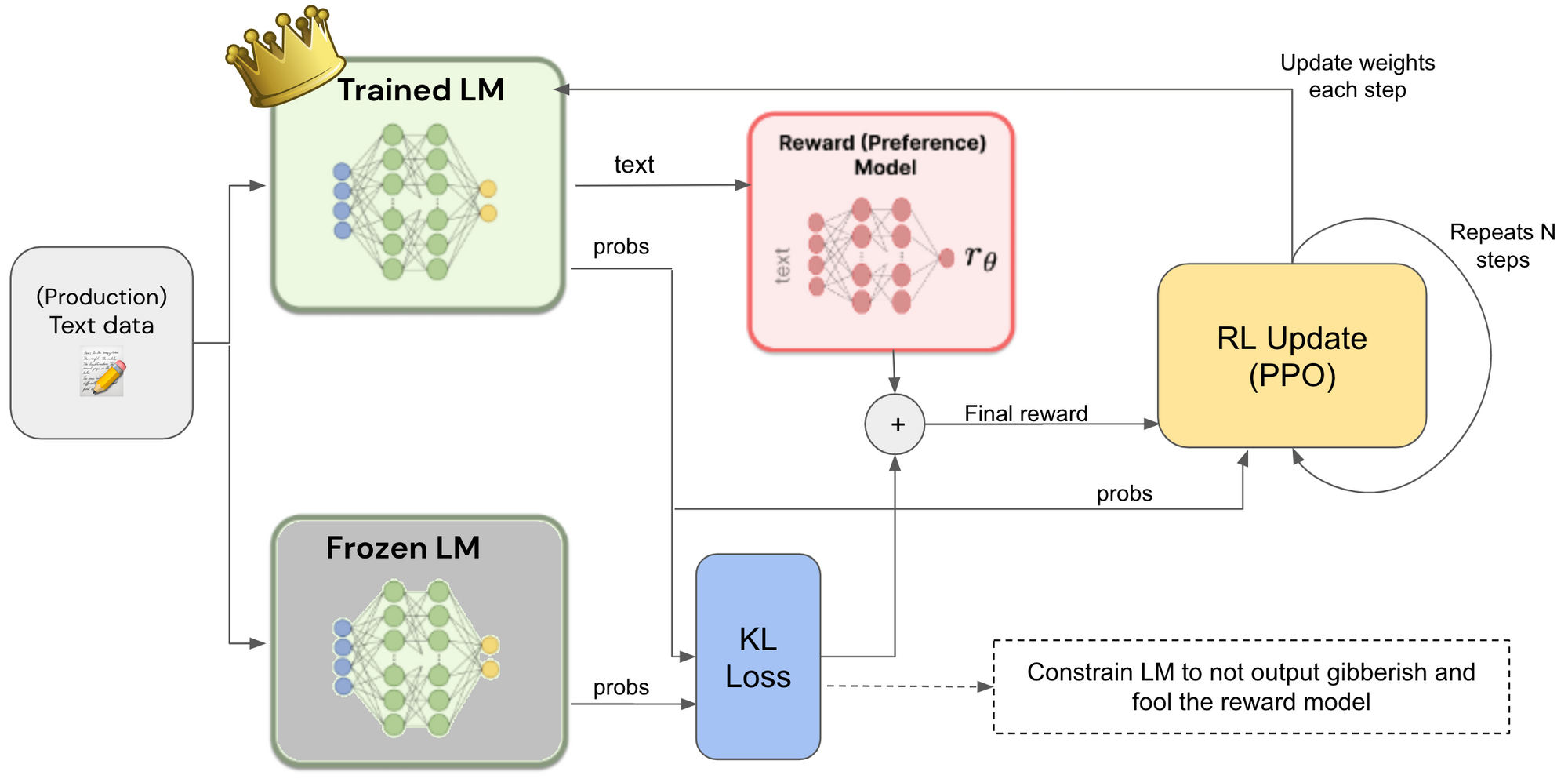

- It details the optimization stages of RLHF, including instruction tuning, reward model training, and various algorithms for alignment.

- The book concludes with discussions on advanced topics such as synthetic data, evaluation, and open research questions in the field.
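
To make the reward model training stage mentioned above more concrete, here is a minimal sketch of a pairwise preference loss in the Bradley-Terry style, a common choice for that stage. It is not taken from the book; the function names and the example scores are hypothetical, and a real reward model would produce the scores with a neural network rather than take them as plain numbers.

```python
import math


def pairwise_preference_loss(chosen_reward: float, rejected_reward: float) -> float:
    """Return -log(sigmoid(chosen_reward - rejected_reward)).

    The loss is small when the preferred (chosen) response scores higher
    than the rejected one, and grows as the ordering is violated.
    """
    margin = chosen_reward - rejected_reward
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch on the sign of the
    # margin so that exp() never receives a large positive argument.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def mean_preference_loss(pairs: list[tuple[float, float]]) -> float:
    """Average the pairwise loss over (chosen_reward, rejected_reward) pairs."""
    return sum(pairwise_preference_loss(c, r) for c, r in pairs) / len(pairs)


if __name__ == "__main__":
    # Hypothetical scores for three human-labelled comparisons.
    example_pairs = [(1.2, 0.3), (0.1, 0.4), (2.0, -1.0)]
    print(f"mean loss: {mean_preference_loss(example_pairs):.4f}")
```

Minimizing this quantity pushes the score of the human-preferred response above the score of the rejected one; when both scores are equal, the loss is log 2.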
