Running local models on an M4 with 24GB memory

jola.dev

May 10, 2026

8 min read

Summary

An M4 Mac with 24GB of memory can run local models well enough for basic tasks such as research and planning, with no internet connection required. The setup reduces dependence on major tech companies and offers a functional, though less capable, alternative to state-of-the-art models.

Key Takeaways

- Run in LM Studio on a 24GB MacBook Pro, the Qwen 3.5-9B model reaches approximately 40 tokens per second, with working tool use and a 128K context window.

- Setting up local models means choosing among runtimes such as Ollama, llama.cpp, or LM Studio, each with its own quirks and limitations.

- Recommended settings for precise coding tasks in thinking mode include a temperature of 0.6, top_p of 0.95, and top_k of 20.

- Local models, while not as capable as state-of-the-art models, can perform basic tasks and reduce dependence on major tech companies.
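The sampling settings listed above (temperature 0.6, top_p 0.95, top_k 20) can be sketched as a request body for an OpenAI-style chat completions endpoint, which local runtimes such as LM Studio expose. This is a minimal illustration, not the article's own code: the model identifier `qwen3.5-9b` and the helper name `build_chat_request` are hypothetical, and `top_k` is not part of the standard OpenAI schema, so whether a given server honors it is an assumption to verify.

```python
import json

# Sampling settings quoted in the takeaways for precise coding in thinking mode.
CODING_SAMPLING = {"temperature": 0.6, "top_p": 0.95, "top_k": 20}


def build_chat_request(prompt, model="qwen3.5-9b", sampling=CODING_SAMPLING):
    """Build a JSON-serializable body for an OpenAI-style chat completions call.

    The default model name is a placeholder; use the identifier your local
    server actually lists.
    """
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    # Merge sampling parameters in as top-level fields of the request body.
    body.update(sampling)
    return body


if __name__ == "__main__":
    print(json.dumps(build_chat_request("Refactor this function."), indent=2))
```

The resulting dictionary would be sent as the JSON body of a POST to the local server's chat completions route (for LM Studio, the default base URL is `http://localhost:1234/v1`). Keeping the settings in one named constant makes it easy to swap in a different profile for non-coding tasks.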

Community Sentiment

Positives

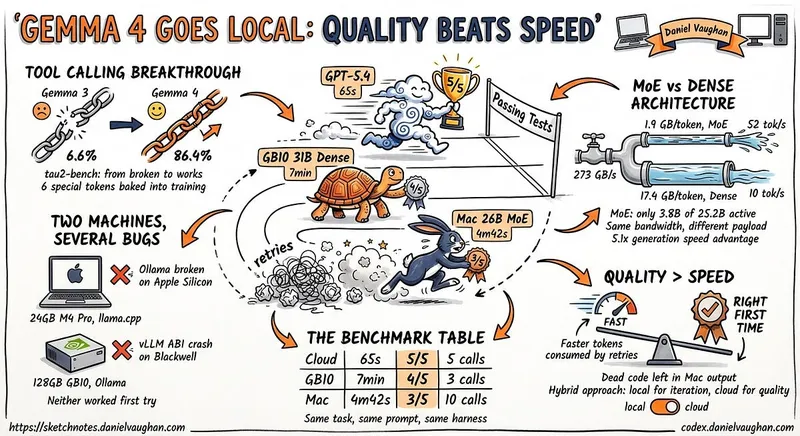

- Recent models like Qwen 3.6 and Gemma show significant improvements in local coding capabilities, suggesting a shift towards more practical applications for developers.

- Gemma 4 has established a new baseline for local models, providing a more reliable experience compared to earlier versions, which were often experimental.

- Users report that larger models can effectively handle complex tasks when provided with adequate context, indicating that local models are becoming more competitive with cloud-based solutions.

Concerns

- Many users find that local models, especially smaller ones like the 9B version, struggle with larger problems and are often barely functional for serious development tasks.

- A prevailing sentiment is that the capabilities of local models are overstated relative to frontier models like Opus 4.7, which leads some users to unrealistic expectations.

- High-spec machines are hard to obtain, which limits the usability of local models; many users believe that more RAM is essential for meaningful work.
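The RAM concern can be made concrete with back-of-the-envelope arithmetic for a 9B-parameter model like the one in the takeaways. The sketch below counts weights only; it ignores the KV cache, runtime overhead, and the memory the operating system needs, and the bit widths are illustrative assumptions, since the summary does not say which quantization was used.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Illustrative precisions; real quantized files carry some extra overhead.
    for bits in (16, 8, 4):
        print(f"9B at {bits}-bit: ~{weight_memory_gb(9, bits):.1f} GB")
```

Under these assumptions, 16-bit weights alone would take about 18 GB of a 24GB machine, while 4-bit weights take about 4.5 GB, which is why lower-precision variants leave more room for a long context window.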